In December 2023, the European Parliament and the Council of the EU reached a provisional agreement on the EU Artificial Intelligence Act. This sets the stage for finalizing and implementing the law, which takes a tiered approach: the requirements for an AI system depend on the level of risk it poses. The EU AI Act would be the first wide-ranging AI regulation of its kind. This article looks at the framework of the EU AI Act and how it might affect businesses.

What is the EU AI Act?

According to a press release from the European Parliament, the EU AI Act “aims to ensure that fundamental rights, democracy, the rule of law and environmental sustainability are protected from high-risk AI while boosting innovation and making Europe a leader in the field.”

That seems like a tall order. To unpack it, the EU AI Act would limit what AI systems may do based on the level of risk they present. The act would ban certain uses outright, such as systems that exploit people’s vulnerabilities related to age, disability, or social or economic situation. At lower risk levels, AI systems would be subject to transparency requirements so people know they’re dealing with a machine.

This article highlights the main framework of the EU AI Act.

Who’s affected by the EU AI Act?

There are multiple answers to this question.

Most broadly, the EU AI Act will affect EU citizens generally. Proponents of the act are touting its safeguards for users. For example, in the press release from Parliament, co-rapporteur Dragos Tudorache stated, “The EU is the first in the world to set in place robust regulation on AI, guiding its development and evolution in a human-centric direction.”

More specifically, the law will affect everyone involved with AI systems, including companies that create them and companies that incorporate them into their products and services. Further, certain obligations apply to specific sectors and uses, such as insurance, banking, and AI systems used to influence the outcome of elections. Part of what is notable about the act, however, is its breadth: it would apply to AI systems across industry sectors.

Third, it is important to look at the EU AI Act through the lens of who must comply. Naturally, this includes companies within the EU that provide AI systems. But the act also would apply to companies outside the EU that place AI systems on the EU market, as well as to providers and “deployers” of AI systems located outside the EU if the system’s output is used in the EU. In other words, the effects of the EU AI Act will be felt well outside the EU.

What are the different risk levels in the EU AI Act and what do they mean for your business?

The structure of the EU AI Act is based on four levels of risk: unacceptable, high, limited, and minimal risk. Each level includes corresponding responsibilities. The act would cover a variety of types of risks, in areas ranging from environmental to democracy. Companies should consider whether their activities fit into one of these categories to determine next steps.

The risk levels are explained briefly below:

- Minimal: It appears that many companies using or providing AI systems may not have obligations under the act. According to one press release from Parliament, “The vast majority of AI systems fall into the category of minimal risk.” These types of AI systems include spam filters, recommender systems, and the like. While the low-risk systems get a “free pass,” they may be subject to voluntary measures like codes of conduct.

- Limited: Limited or specific transparency risk appears to be a loose umbrella category for systems like chatbots that interact with humans, some emotion recognition and biometric categorization systems, and deep fakes. (Note, however, that emotion recognition and biometric categorization systems also can be assigned a higher risk level, depending on the use.) Systems in this category would be subject to certain transparency obligations so that people know they are interacting with a machine and/or that these systems are being used.

- High: High-risk AI systems are classified as such “due to their significant potential harm to health, safety, fundamental rights, environment, democracy and the rule of law.” They include systems used as safety components or covered by EU health and safety harmonization legislation, as well as systems used in specific areas, namely: remote biometrics; critical infrastructure; education and vocational testing; employment, worker management, and access to self-employment; access to and enjoyment of essential private services and public services and benefits; law enforcement; migration, asylum, and border control management; and administration of justice and democratic processes. Systems in the high-risk category are subject to a number of requirements, including risk mitigation, detailed documentation, human oversight, and cybersecurity.

- Unacceptable: Finally, the EU AI Act would ban a number of specific activities by AI systems (with some law enforcement exemptions):

  - Biometric categorization using sensitive characteristics like political, religious, or philosophical beliefs, sexual orientation, and race;

  - Untargeted scraping of facial images from the internet or closed-circuit TV (CCTV) footage to create facial recognition databases;

  - Emotion recognition in the workplace and educational institutions;

  - Social scoring based on social behavior or personal characteristics;

  - AI systems that “manipulate human behavior to circumvent their free will”; and

  - AI used to exploit people’s vulnerabilities (due to age, disability, social or economic situation).

Notably, the EU AI Act will not affect AI systems used solely for military or defense purposes, or research and innovation. It also does not apply to people using AI for non-professional reasons.
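For companies taking stock of their systems, the four tiers described above can be kept as a simple lookup. The following is only a minimal sketch in Python: the names `RiskTier`, `TIER_CONSEQUENCES`, and `inventory`, as well as the example systems, are hypothetical, and the consequences are paraphrased from this article rather than taken from the legal text.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk levels named in the article."""
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Headline consequences per tier, paraphrased from the article (not legal text).
TIER_CONSEQUENCES = {
    RiskTier.MINIMAL: ["likely no mandatory obligations", "voluntary codes of conduct"],
    RiskTier.LIMITED: ["transparency obligations"],
    RiskTier.HIGH: ["risk mitigation", "detailed documentation",
                    "human oversight", "cybersecurity"],
    RiskTier.UNACCEPTABLE: ["prohibited"],
}

# Hypothetical internal inventory: system name -> tier assigned after review.
inventory = {
    "spam-filter": RiskTier.MINIMAL,
    "support-chatbot": RiskTier.LIMITED,
    "cv-screening-tool": RiskTier.HIGH,
}


def consequences_for(system: str) -> list[str]:
    """Look up the headline consequences for a system in the inventory."""
    return TIER_CONSEQUENCES[inventory[system]]


for name, tier in inventory.items():
    print(f"{name}: {tier.value} -> {', '.join(consequences_for(name))}")
```

Assigning a tier to a real system is a legal judgment that a lookup like this cannot make; the table only records the outcome of that review.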

How will the EU AI Act reshape intellectual property rights?

Using copyrighted material to train AI has been a high-profile issue lately, with several well-publicized lawsuits by artists and writers against AI companies. The EU AI Act addresses this issue. General-purpose AI (“GPAI”) must comply with measures that include following EU copyright law and providing detailed summaries of the content used for training. Where copyright owners have opted out of making their data available for text and data mining, GPAI also must honor the opt-out.

Another transparency requirement that could have IP implications is the requirement to label deep fakes.

Of course, any sweeping law that affects technology will also affect IP rights and development. This can include everything from requirements about disclosures and contracts about systems and processes to the testing conditions for high-risk AI.

What are the penalties under the EU AI Act?

Penalties for noncompliance range from 7.5 million euros or 1.5% of global turnover to 35 million euros or 7% of global turnover, depending on the offense and the size of the company.
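To get a feel for the scale of these figures, here is a rough Python sketch. It assumes that the applicable cap is whichever of the two figures is higher, which this article does not state, and the function name `fine_cap_eur` and the turnover figure are hypothetical.

```python
def fine_cap_eur(fixed_eur: int, turnover_pct: float, global_turnover_eur: int) -> float:
    """Return the larger of the fixed amount and the turnover-based amount.

    Assumption: the cap is whichever figure is higher. The article only
    gives the two figures, not how they are combined.
    """
    return max(fixed_eur, global_turnover_eur * turnover_pct / 100)


# Hypothetical company with EUR 2 billion in global turnover.
turnover = 2_000_000_000

top_tier = fine_cap_eur(35_000_000, 7, turnover)       # 7% of turnover exceeds EUR 35M
bottom_tier = fine_cap_eur(7_500_000, 1.5, turnover)   # 1.5% of turnover exceeds EUR 7.5M

print(f"Top tier cap:    EUR {top_tier:,.0f}")
print(f"Bottom tier cap: EUR {bottom_tier:,.0f}")
```

Under that assumption, the percentage figure dominates for large companies, while the fixed amount dominates for smaller ones. How the final text treats smaller companies may differ.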

When will the EU AI Act come into effect?

With the recent provisional agreement, the process appears to be nearing completion. Some expect that the act may be finalized by the time of the European Parliament elections in June 2024.

Two versions of an unofficial copy of the text of the law have been leaked online. Reports on the leaked copies state that the law will enter into force on the twentieth day after publication in the EU Official Journal. However, its provisions would not apply for some time after that. Reportedly, the bans on “unacceptable risk” activity would apply six months after the law enters into force, while the requirements for high-risk AI systems discussed above may not apply for up to thirty-six months.
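The reported timeline is simple date arithmetic, sketched below in Python. The publication date is purely hypothetical, the helper names `add_months` and `key_dates` are illustrative, and the offsets come from reports on the leaked text, so the final law may count these periods differently.

```python
import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, clamping to the month's last day."""
    index = d.month - 1 + months
    year = d.year + index // 12
    month = index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def key_dates(published: date) -> dict[str, date]:
    """Derive the reported milestones from a publication date."""
    in_force = published + timedelta(days=20)  # twentieth day after publication
    return {
        "in_force": in_force,
        "bans_apply": add_months(in_force, 6),              # "unacceptable risk" bans
        "high_risk_rules_apply": add_months(in_force, 36),  # reported upper bound
    }


# Hypothetical publication date, for illustration only.
for milestone, when in key_dates(date(2024, 6, 1)).items():
    print(f"{milestone}: {when.isoformat()}")
```

Swapping in the actual publication date, once known, gives a first approximation of the compliance deadlines to plan around.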

How to leverage AI to protect intellectual property online

The EU AI Act was first proposed in 2021. Now that we have a clearer picture of what the law will look like when finalized, and possibly even a timeline, it is time for companies to review the AI systems that they use and offer.

AI and intellectual property are often intertwined, with the increasing prevalence of AI systems highlighting the potential risks for IP owners. AI also can be a tool to protect IP, with AI systems available to assist with infringement detection and enforcement.

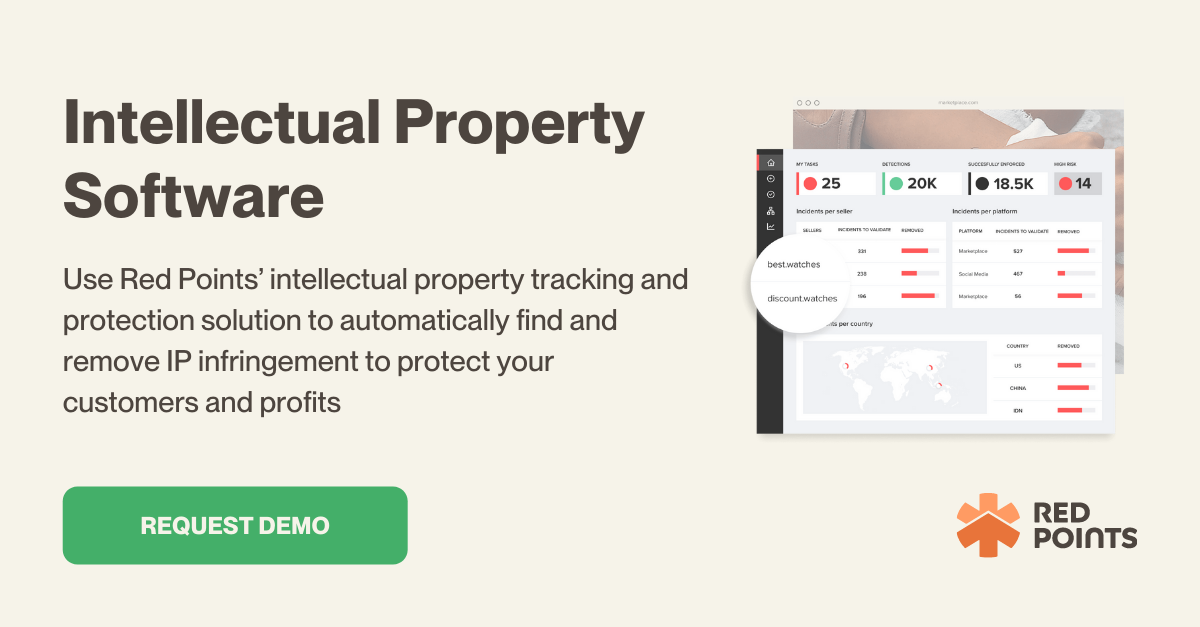

Red Points’ Intellectual Property Software offers automated tools for tracking and protecting intellectual property. It uses AI-driven techniques like bot-powered searches, image recognition, and machine learning to detect IP infringements across various online platforms. The software streamlines enforcement by handling potential infringements and providing detailed analytics and reports. It’s designed to mitigate revenue loss, protect brand reputation, and make enforcement more efficient and cost-effective.

What’s next

In light of the EU AI Act, businesses need to reassess their AI strategies, particularly for intellectual property (IP) protection. With the act’s focus on transparency and EU copyright law compliance, companies need to align their AI usage accordingly. Red Points offers AI-driven solutions tailored for this new regulatory landscape, ensuring effective IP protection while adhering to legal standards. To understand how Red Points can help your business navigate these changes, request a demo and take a proactive step towards future-proofing your IP strategy.